Project

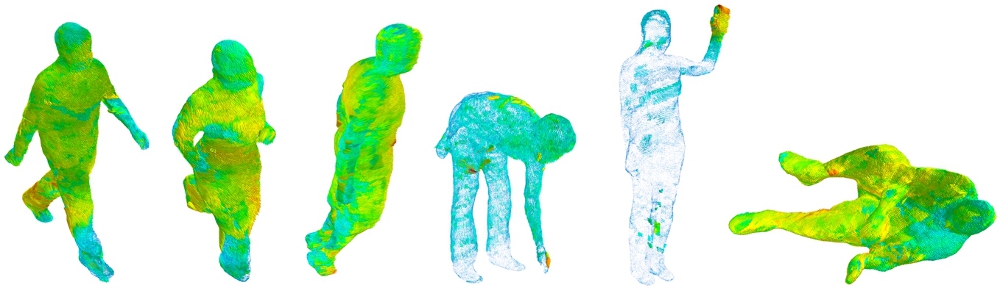

Several terror incidents around the world have revealed the practical limitations of surveillance cameras in public places for forensic applications: the images these cameras capture are of poor quality and of limited use for automated identification. The poor quality stems from unfavourable imaging conditions, occlusion, varying lighting, motion blur, and, most importantly, the distance between the cameras and the objects in the scene. This large distance leaves only a very small image area for each object part; for example, a face may be represented by only a few pixels, so the details of facial regions cannot be seen. Facial images of such small size, further worsened by the other forms of degradation mentioned, can hardly be used in computer vision tasks such as detection and recognition. Using such surveillance footage in forensic applications therefore requires extensive manual work (usually on the scale of thousands of man-hours), e.g., to find suspects across networks of cameras or to perform any kind of recognition.

The objective of this project is to fill the gap between computer vision and forensics by improving the quality of facial images captured by surveillance cameras. We do so by improving existing image super-resolution methods: we combine information from three image modalities, namely the visible spectrum (RGB), depth, and thermal domains, and exploit both spatial and temporal information.

Scientific Work

Real-world super-resolution of face-images from surveillance cameras

Aakerberg, A., Nasrollahi, K. & Moeslund, T. B., Feb. 2022, In: IET Image Processing. 16, 2, pp. 442-452, 11 p.

Real-World Thermal Image Super-Resolution

Allahham, M. M. J., Aakerberg, A., Nasrollahi, K. & Moeslund, T. B., 2021, Advances in Visual Computing – 16th International Symposium, ISVC 2021, Virtual Event, October 4-6, 2021, Proceedings, Part I. Bebis, G., Athitsos, V., Yan, T., Lau, M., Li, F., Shi, C., Yuan, X., Mousas, C. & Bruder, G. (eds.). Springer, pp. 3-14, 12 p. (Lecture Notes in Computer Science, Vol. 13017).

RELLISUR: A Real Low-Light Image Super-Resolution Dataset

Aakerberg, A., Nasrollahi, K. & Moeslund, T. B., 20 Aug. 2021, Advances in Neural Information Processing Systems 35 (NeurIPS 2021).

Semantic Segmentation Guided Real-World Super-Resolution

Aakerberg, A., Johansen, A. S., Nasrollahi, K. & Moeslund, T. B., 2021, (Accepted/In press) Winter Conference on Applications of Computer Vision.

Single-Loss Multi-task Learning For Improving Semantic Segmentation Using Super-Resolution

Aakerberg, A., Johansen, A. S., Nasrollahi, K. & Moeslund, T. B., 2021, Computer Analysis of Images and Patterns – 19th International Conference, CAIP 2021, Proceedings. Tsapatsoulis, N., Panayides, A., Theocharides, T., Lanitis, A., Lanitis, A., Pattichis, C., Pattichis, C. & Vento, M. (eds.). Springer Science+Business Media, Vol. 13053, pp. 403-411, 9 p. (Lecture Notes in Computer Science (including subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics), Vol. 13053 LNCS).

Improving a Deep Learning based RGB-D Object Recognition Model by Ensemble Learning

Aakerberg, A., Nasrollahi, K. & Heder, T., 8 Mar. 2018, 2017 Seventh International Conference on Image Processing Theory, Tools and Applications (IPTA). IEEE, pp. 1-6, 6 p. 8310101. (International Conference on Image Processing Theory, Tools and Applications (IPTA)).

Complementing SRCNN by Transformed Self-Exemplars

Aakerberg, A., Rasmussen, C. B., Nasrollahi, K. & Moeslund, T. B., 28 Mar. 2017, Video Analytics: Face and Facial Expression Recognition and Audience Measurement. Springer, (Lecture Notes in Computer Science, Vol. 10165).

Funding

Reveal More Details: Spatiotemporal RGB-D-T Super-Resolution is funded by Danmarks Frie Forskningsfond (Independent Research Fund Denmark).

Contact

PhD student: Andreas Aakerberg

Email: anaa@create.aau.dk

Supervisor: Kamal Nasrollahi

Email: kn@create.aau.dk