Project

In recent years, human behavior analysis and action recognition have become growing topics within the field of video surveillance. With the increasing amount of surveillance footage, intelligent surveillance systems are expected to track the behavior of individuals within a given context.

Being able to track individuals and detect abnormal behavior is a powerful tool for preventing dangerous situations such as medical emergencies, suicidal behavior, violent behavior and similar criminal activities. However, accurately tracking and analyzing the behavior of agents within a given context relies heavily on detecting the objects of interest accurately and robustly, and the quality of the detector has a cascading effect on all subsequent analyses.

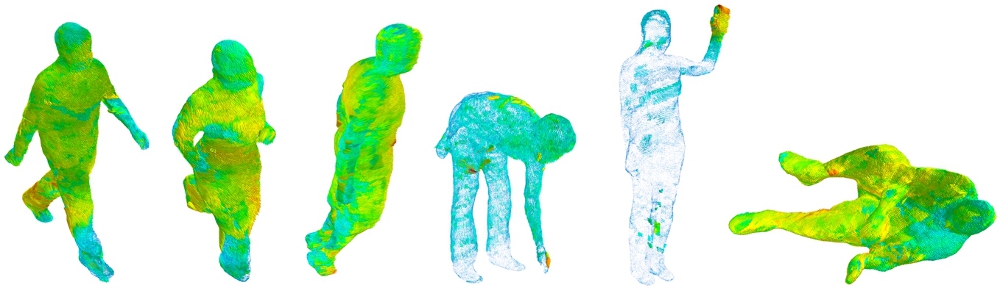

For surveillance, it is desirable to have a system that is robust to many changes within the context at as little cost to accuracy as possible. The choice of camera sensor is therefore a very important factor. RGB cameras produce information-dense images that are well suited to detecting and recognizing different objects, but they are very sensitive to changes in lighting conditions. Thermal cameras, on the other hand, provide video data that is robust to changes in lighting conditions, but at a lower level of detail. Depth-sensing cameras introduce a further means of localization by providing information about the third dimension.

The aim of this project is to leverage advances in object detection across different modalities to improve generic object detection within each individual modality, and to use internal information-sharing techniques to enhance detection through complementary sensing.

Funding

This PhD project is directly funded as part of the Milestone Research Programme at AAU (MRPA).

Contact

PhD Fellow: Anders S. Johansen

Email: asjo@create.aau.dk

Supervisor: Kamal Nasrollahi

Email: kn@create.aau.dk