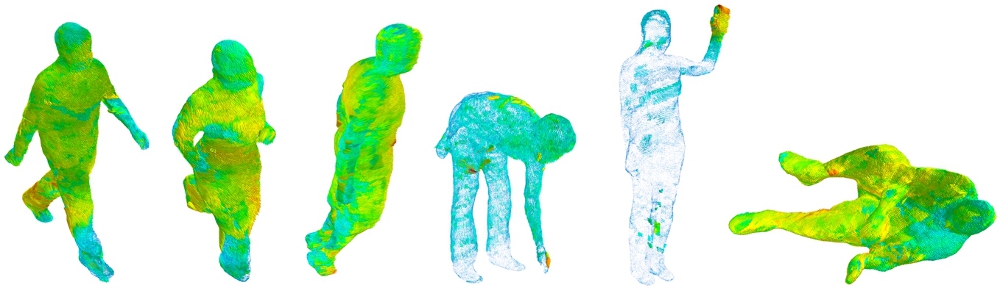

We present the Multimodal Intensity Pain (MIntPAIN) database, the first publicly available database for RGBDT (RGB, depth and thermal) pain level recognition in sequences. We provide baseline results, including recognition of 5 pain levels, obtained by analyzing the visual modalities independently and in fusion, using deep CNN and LSTM models.

The database was captured by applying electrical muscle pain stimulation to the subjects. It contains 20 subjects. Each subject completed two trials during the data capture session, and each trial has 40 sweeps of pain stimulation. In each sweep we captured two recordings: one for no pain (Label0) and the other for pain (Label1-Label4). In total, each trial has 80 folders from 40 sweeps (some sweeps are missing for some subjects). Each of the 80 folders in a trial folder contains three subfolders holding the RGB, depth and thermal video frames of one stimulation. Frame synchronization data for these modalities, along with some other supporting scripts, are provided with the database and described in its readme.
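The folder layout described above can be indexed with a short script. The following is a minimal sketch, not the supporting script shipped with the database: it assumes a subject/trial/stimulation-folder nesting, and the modality subfolder names `RGB`, `D` and `T` are hypothetical placeholders that should be replaced with the names given in the database readme. It also computes the upper bound on the number of stimulation folders implied by the figures above (20 subjects, 2 trials, 40 sweeps, 2 recordings per sweep).

```python
from pathlib import Path

# Hypothetical modality subfolder names; the real names are in the database readme.
MODALITIES = ("RGB", "D", "T")


def index_sequences(root, modalities=MODALITIES):
    """List every stimulation folder that contains all modality subfolders.

    Assumed layout (an assumption, not confirmed by the source):
        root / <subject> / <trial> / <stimulation folder> / <modality> / frames
    """
    records = []
    root = Path(root)
    for subject in sorted(p for p in root.iterdir() if p.is_dir()):
        for trial in sorted(p for p in subject.iterdir() if p.is_dir()):
            for seq in sorted(p for p in trial.iterdir() if p.is_dir()):
                # Collect the frame files of each modality that is present.
                frames = {
                    m: sorted((seq / m).glob("*"))
                    for m in modalities
                    if (seq / m).is_dir()
                }
                # Skip incomplete folders (some sweeps are missing for some subjects).
                if len(frames) == len(modalities):
                    records.append(
                        {
                            "subject": subject.name,
                            "trial": trial.name,
                            "sequence": seq.name,
                            "frames": frames,
                        }
                    )
    return records


def expected_upper_bound(subjects=20, trials=2, sweeps=40, recordings_per_sweep=2):
    """Maximum number of stimulation folders if no sweep were missing."""
    return subjects * trials * sweeps * recordings_per_sweep
```

Comparing `len(index_sequences(root))` with `expected_upper_bound()` gives a quick check of how many sweeps are missing in a downloaded copy.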

Citation:

1. Haque M. A. et al. (2018) Deep Multimodal Pain Recognition: A Database and Comparison of Spatio-Temporal Visual Modalities. In: Proc. of the 13th IEEE Conf. on Automatic Face and Gesture Recognition (FG2018), Xi’an, China

2. Bellantonio M. et al. (2017) Spatio-temporal Pain Recognition in CNN-Based Super-Resolved Facial Images. In: Nasrollahi K. et al. (eds) Video Analytics. Face and Facial Expression Recognition and Audience Measurement. FFER 2016, VAAM 2016. Lecture Notes in Computer Science, vol 10165. Springer, Cham

Download Instructions:

An EULA (link is here) must be signed by a person with a permanent position at an academic institute. Please send the signed EULA to anaa[at]create.aau.dk (preferred), and/or kn[at]create.aau.dk, and/or tbm[at]create.aau.dk, and/or shb[at]icv.tuit.ut.ee, and/or sergio[at]maia.ub.es. You will be contacted shortly with a link to the database.

Note: Please read the readme description provided with the database before using the database.